DFS (Distributed File System) works great but requires maintenance. Abrupt shutdowns, excessive replication and database wraps can cause DFS replication to stop working. If this goes unmonitored, it can create a nightmare scenario in which different versions of the same files are spread across multiple targets.

If DFSR is left unmanaged and unchecked in an error state, you reach the point of no return with the following error:

The DFS Replication service stopped replication on the folder with the following local path: [path]. This server has been disconnected from other partners for 409 days, which is longer than the time allowed by the MaxOfflineTimeInDays parameter (60). DFS Replication considers the data in this folder to be stale, and this server will not replicate the folder until this error is corrected.

To resume replication of this folder, use the DFS Management snap-in to remove this server from the replication group, and then add it back to the group. This causes the server to perform an initial synchronization task, which replaces the stale data with fresh data from other members of the replication group.
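If you are checking exported event text from several servers, the two numbers in this message (days disconnected and the MaxOfflineTimeInDays limit) can be pulled out with a short script. The following is only a sketch: it assumes the message wording matches the text quoted above, and the function name `parse_stale_event` is made up for illustration.

```python
import re

# Matches the wording of the event message quoted above (an assumption:
# other Windows versions or languages may word the message differently).
STALE_RE = re.compile(
    r"disconnected from other partners for (\d+) days.*?"
    r"MaxOfflineTimeInDays parameter \((\d+)\)",
    re.DOTALL,
)

def parse_stale_event(message):
    """Return (days_offline, max_offline_days), or None if the text does not match."""
    m = STALE_RE.search(message)
    if m is None:
        return None
    return int(m.group(1)), int(m.group(2))

sample = (
    "This server has been disconnected from other partners for 409 days, "
    "which is longer than the time allowed by the MaxOfflineTimeInDays parameter (60)."
)
days, limit = parse_stale_event(sample)
print(f"offline {days} days, limit {limit}, over by {days - limit}")
```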

This error occurs when partners cannot replicate successfully for longer than the maximum offline time allowed, at which point DFSR considers the replicated data stale. To correct this error, open the DFS Management console and navigate to the DFS namespace that is not replicating.

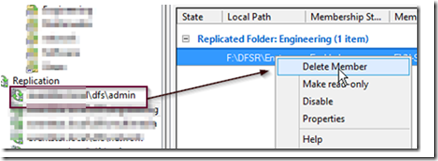

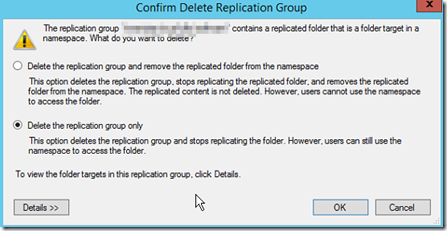

Right-click the member, choose Delete and confirm when prompted, as shown below. Note that the content will not be deleted.

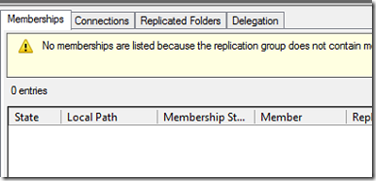

If there are multiple members that are not replicating, delete them all from the replication partnerships until there are no member servers in the namespace, as shown below.

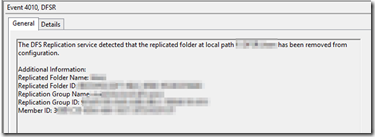

Wait until you see event IDs 3006 and 4010, which indicate that the replication group has been removed from the configuration.

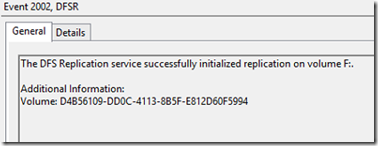

Finally, when event 2002 shows that DFSR initialized successfully, you are ready to add new folders.

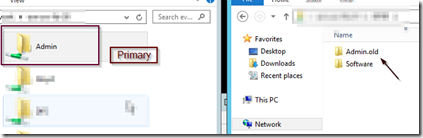

If you use hub-and-spoke replication, you will have one primary server where the most current data is located. Identify this server, because it will hold the primary replication folder we will use.

If you use full mesh replication and users access the data through a domain namespace, your current data will likely be spread across different target folders on several servers. If this is the case, identify the folder with the majority of current data, then find all the other target folders and rename them as OLD. Wait for the events above first, so that the folders are released; otherwise you may need to stop the DFSR service on those servers in order to rename the folders.

The reason for keeping the old folders is that you will no doubt get calls from users stating that the most current versions of their files have reverted to prior versions. If this happens, you can simply locate the old target, pull the file out and save the day.
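Rather than waiting for the calls, you can also go looking for files that are newer in an OLD folder than in the new target. The following is a rough sketch rather than a tested tool: it compares last-modified times only, and the function name `newer_in_old` is hypothetical.

```python
import os

def newer_in_old(old_root, new_root):
    """List relative paths of files that are missing from new_root
    or have a later modification time under old_root."""
    hits = []
    for dirpath, _dirs, files in os.walk(old_root):
        for name in files:
            old_path = os.path.join(dirpath, name)
            rel = os.path.relpath(old_path, old_root)
            new_path = os.path.join(new_root, rel)
            if (not os.path.exists(new_path)
                    or os.path.getmtime(old_path) > os.path.getmtime(new_path)):
                hits.append(rel)
    return sorted(hits)
```

Call it with the renamed OLD folder and the new replicated folder, then review the returned list by hand before copying anything back. A later timestamp does not always mean the better version.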

After renaming the old folders, create new replication folders across the target servers.

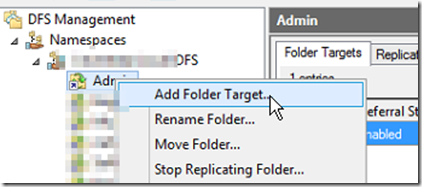

In DFS Management, locate the namespace and add the new folders as targets once again.

Once you have multiple targets with replication, your files should start to replicate from the primary server with the largest number of current files to the new target folders.

REMEMBER: Don’t discard your OLD folders. They may contain more current data that you will need.

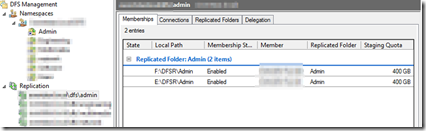

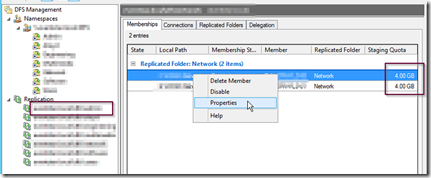

Finally, to avoid problems in the future, make sure you have allocated enough resources to the disk staging area as well as to the DFSR database. The defaults are only 4 GB, so you may want to increase them. To do this, open the properties of each replication partner as shown below.

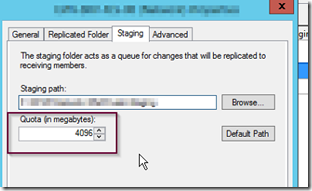

In the staging tab, increase the staging area depending on the size of the folders you are replicating.

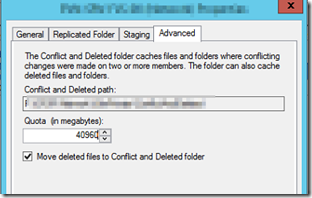

In the advanced tab, allocate resources to the database.

I generally set the staging area to the largest file size multiplied by 32. Microsoft also publishes best-practice guidance on sizing the staging area. After initial replication, keep an eye out for high and low watermark events, which can help you tweak the settings.
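To put a number on that rule, a small script can walk the replicated folder and do the arithmetic. This is a minimal sketch under stated assumptions: the function names are made up, and the second figure (the combined size of the 32 largest files) is included for comparison because, as far as I know, that is how Microsoft's guidance is usually stated.

```python
import heapq
import os

def file_sizes(root):
    """Yield the size in bytes of every file under root."""
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            try:
                yield os.path.getsize(os.path.join(dirpath, name))
            except OSError:
                continue  # skip files that vanish or cannot be read

def staging_estimates(sizes, n=32):
    """Return (largest file * n, sum of the n largest files), both in bytes."""
    top = heapq.nlargest(n, sizes)  # sorted largest first
    if not top:
        return 0, 0
    return top[0] * n, sum(top)
```

For example, `staging_estimates(file_sizes(r"D:\Shares\Data"))` (a hypothetical path) returns both figures in bytes. The first matches the rule of thumb above and is never smaller than the second.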